The Claude Code local LLM hybrid setup is becoming an important approach for developers who want to balance performance, cost, and flexibility in AI-assisted coding. It pairs a cloud-based tool, Claude Code, with locally hosted large language models (LLMs) to improve efficiency and reduce reliance on token-based usage.

As AI development tools continue to evolve, many users are exploring hybrid systems that allow them to run some tasks locally while reserving cloud resources for more complex operations. This shift is reshaping how developers interact with artificial intelligence in everyday workflows.

Growing Shift Toward Hybrid AI Development Systems

The Claude Code local LLM hybrid setup reflects a broader trend in AI usage where developers are combining cloud and local computing power. Instead of relying entirely on one system, users are now distributing workloads between different environments.

This approach is driven by several practical concerns:

- Rising cost of cloud-based AI usage

- Token consumption limits in subscription plans

- Desire for offline or low-latency processing

- Increased accessibility of local AI hardware

Why Developers Are Changing Their Workflow

Many developers report that even advanced cloud models can consume a significant number of tokens during routine tasks. This has led them to look for cheaper alternatives that still maintain output quality.

Key motivations include:

- Reducing dependency on paid API usage

- Improving control over AI workloads

- Increasing reliability during internet downtime

- Experimenting with self-hosted AI systems

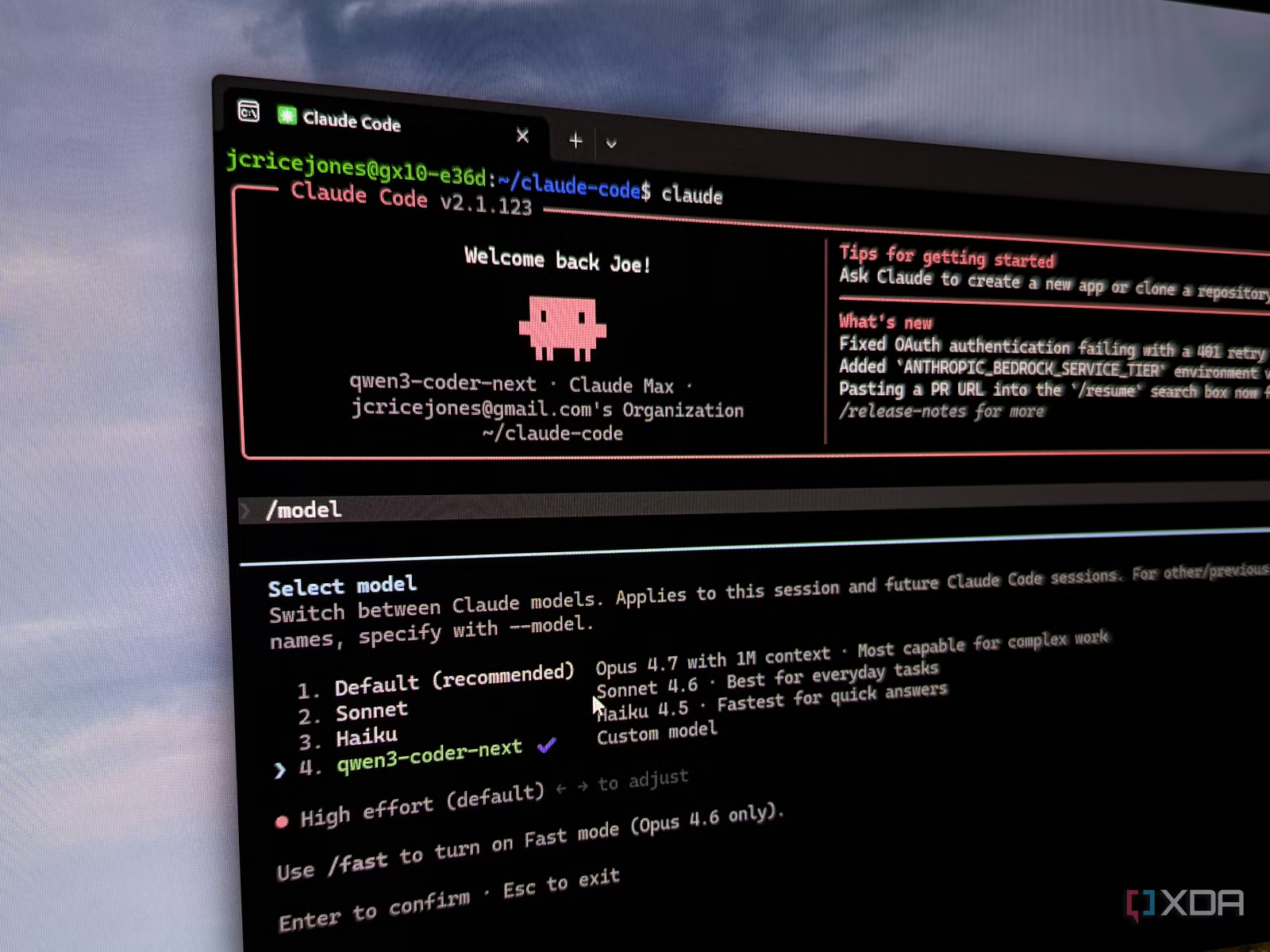

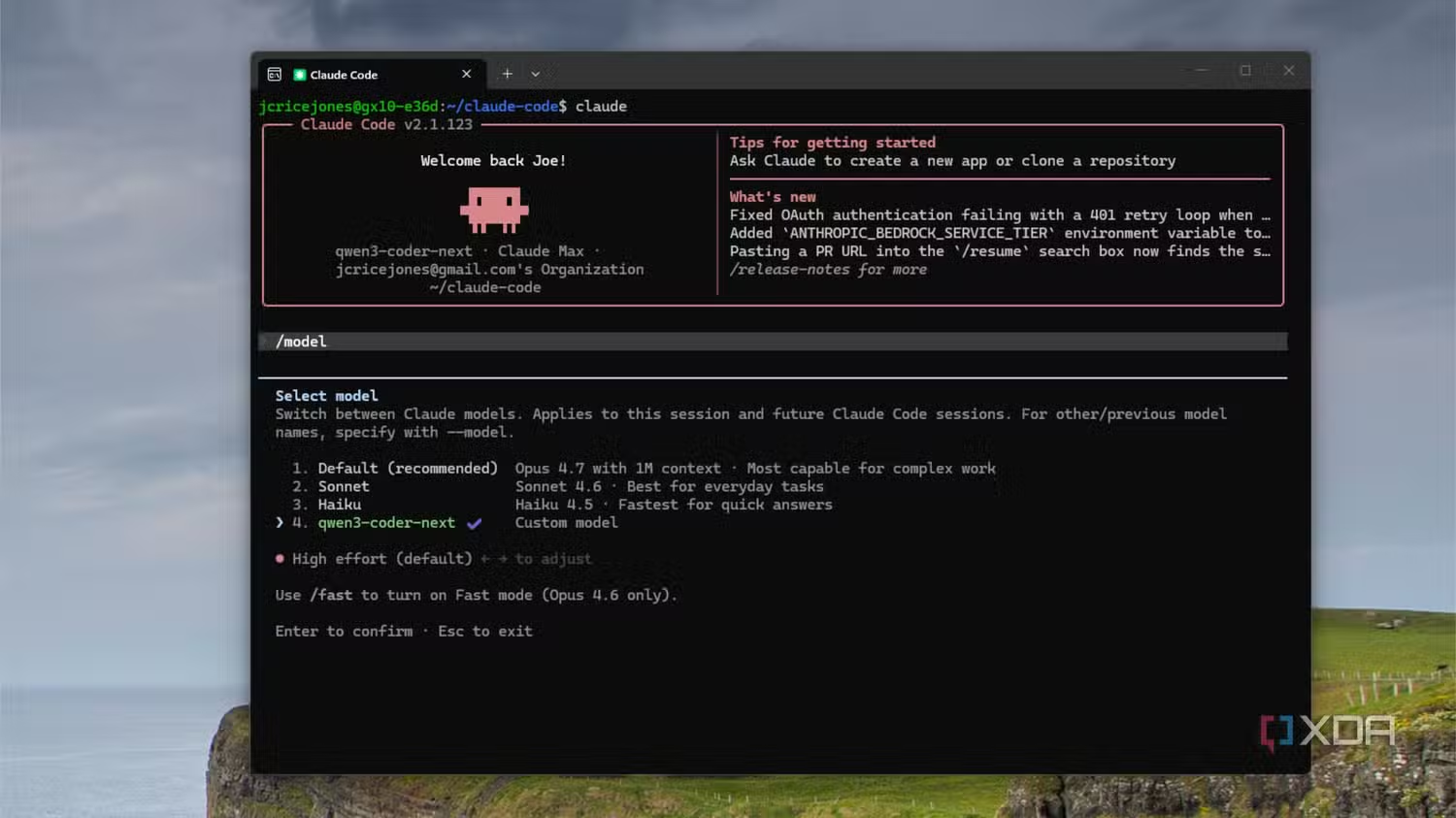

The Role of Claude Code in Modern AI Workflows

Claude Code has become a widely used tool for AI-assisted programming tasks. However, its cloud-based nature means usage is often tied to token limits and subscription tiers.

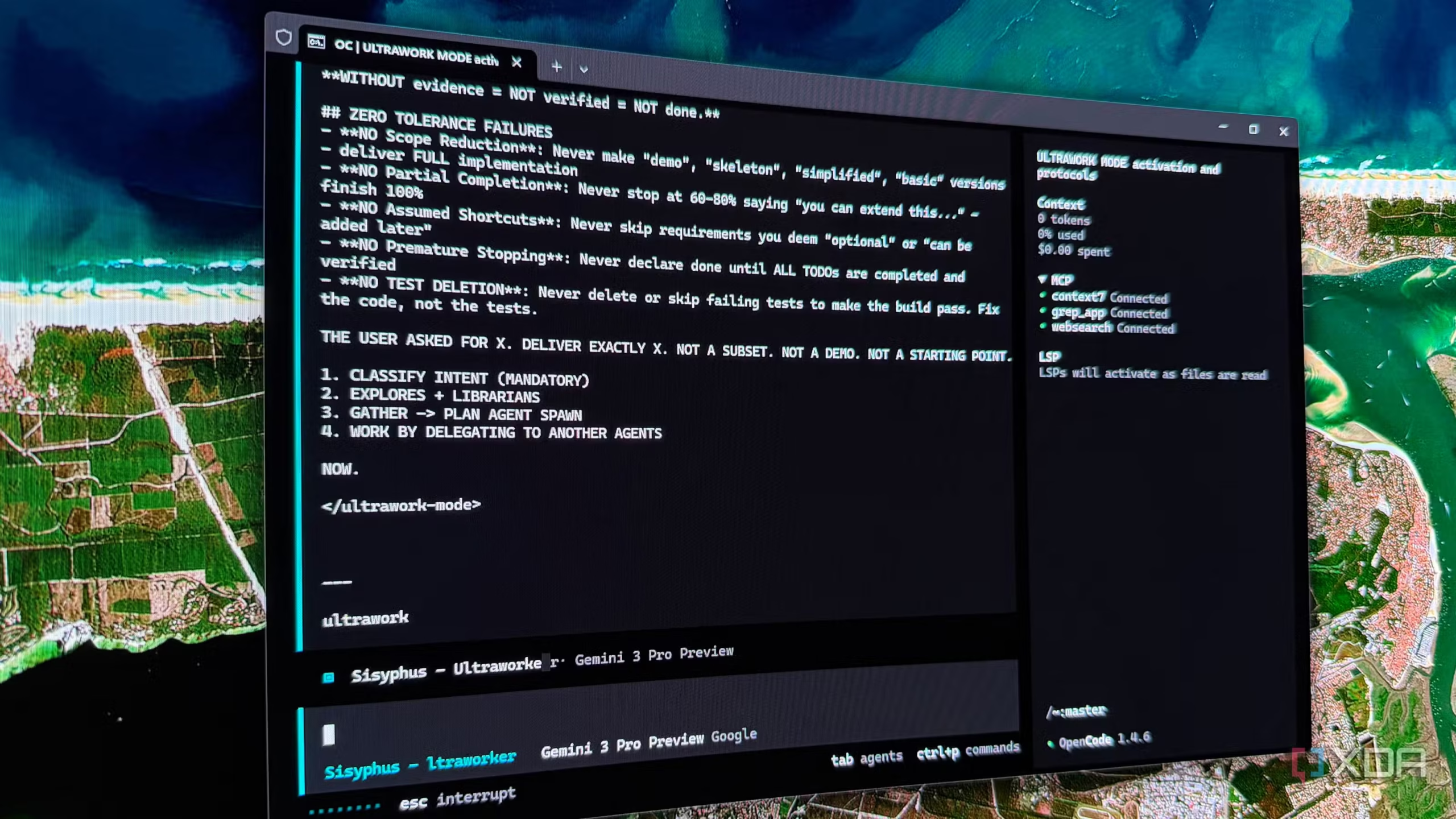

In a Claude Code local LLM hybrid setup, the tool is used strategically:

- Cloud AI handles complex reasoning and large tasks

- Local LLM handles smaller or repetitive operations

- Workload is balanced to optimize cost and performance

Rise of Local LLM Hosting in AI Development

Growing interest in the Claude Code local LLM hybrid setup is closely tied to improvements in local model hosting technology. Developers can now run larger models on personal or business hardware, reducing their dependence on cloud infrastructure.

Improvements in Hardware Capabilities

Modern AI hardware solutions are making local inference more practical. Devices such as advanced workstation GPUs and specialized AI systems are enabling users to run models that were previously limited to cloud environments.

Some key developments include:

- More powerful consumer-grade GPUs

- Dedicated AI accelerator systems

- Improved memory efficiency for large models

- Better optimization for open-source LLMs

Challenges of Traditional Local LLMs

Despite progress, local LLMs have historically faced limitations that slowed adoption.

Common limitations included:

- Smaller models with limited reasoning ability

- High hardware cost for large model deployment

- Complex setup and maintenance requirements

- Performance gaps compared to cloud models

These issues made full replacement of cloud systems unrealistic for most users.

Why Hybrid AI Setups Are Becoming Popular

The Claude Code local LLM hybrid setup is gaining attention because it offsets the weaknesses of both cloud and local AI systems while drawing on the strengths of each.

Balancing Cost and Performance

One of the biggest advantages of hybrid systems is cost optimization. Cloud AI usage is often based on tokens or usage tiers, which can become expensive for heavy users.

A hybrid approach allows:

- Reduced cloud API consumption

- Lower monthly subscription strain

- Better long-term cost distribution

- More predictable usage patterns

Better Resource Allocation Strategy

Instead of relying entirely on cloud processing, users can divide tasks intelligently.

Typical workflow distribution:

- Simple tasks → Local LLM

- Medium tasks → Hybrid processing

- Complex reasoning → Claude Code cloud model

This structure helps maintain efficiency without sacrificing quality.
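
The distribution above can be sketched as a small routing function. This is only an illustration under stated assumptions: the `Task` fields, the token thresholds, and the `Route` names are hypothetical and do not come from Claude Code or any specific local runtime. "Hybrid" is read here as a task that is too large for the simple tier but does not need deep reasoning.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    LOCAL = "local"    # handled entirely by the local LLM
    HYBRID = "hybrid"  # split between local and cloud processing
    CLOUD = "cloud"    # sent to the cloud model


@dataclass
class Task:
    description: str
    estimated_tokens: int       # rough size of prompt plus expected output
    needs_deep_reasoning: bool  # e.g. a multi-file refactor or design work


# Hypothetical thresholds; tune them for your own models and hardware.
SIMPLE_TOKEN_LIMIT = 2_000
MEDIUM_TOKEN_LIMIT = 8_000


def choose_route(task: Task) -> Route:
    """Pick where a task should run, following the three tiers above."""
    if task.needs_deep_reasoning or task.estimated_tokens > MEDIUM_TOKEN_LIMIT:
        return Route.CLOUD
    if task.estimated_tokens > SIMPLE_TOKEN_LIMIT:
        return Route.HYBRID
    return Route.LOCAL


if __name__ == "__main__":
    examples = [
        Task("rename a variable", 300, False),
        Task("write unit tests for a module", 5_000, False),
        Task("redesign the service architecture", 4_000, True),
    ]
    for task in examples:
        print(f"{task.description}: {choose_route(task).value}")
```

In practice the hard part is estimating task complexity up front; a token count and a manual flag are crude stand-ins for that judgment.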

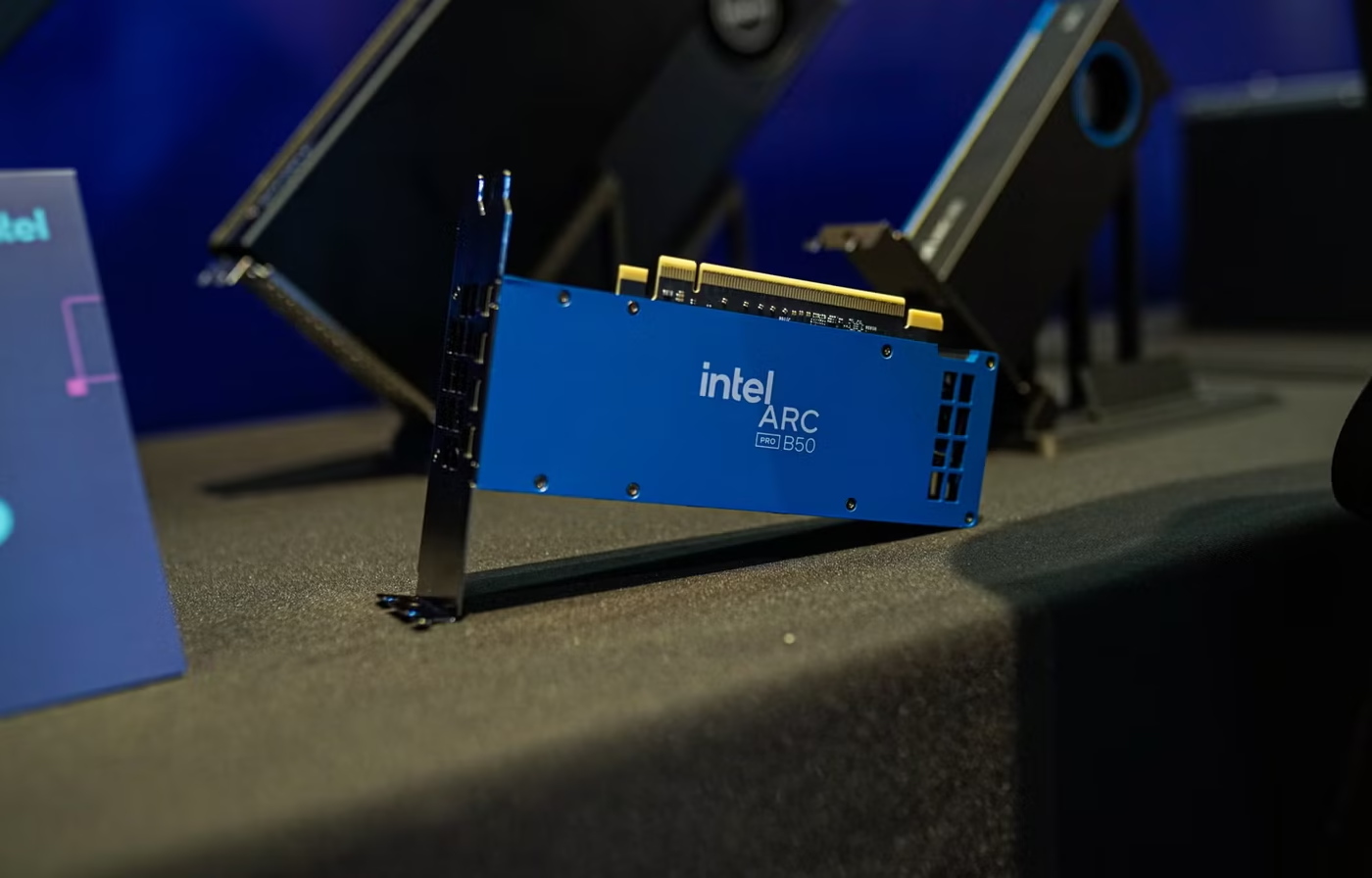

Hardware Evolution Supporting Local AI Models

Advancements in AI hardware are central to the growth of the Claude Code local LLM hybrid setup. High-performance computing systems are now more accessible than before, though still relatively expensive.

High-End AI Systems and Workstations

New AI-focused hardware platforms allow users to run larger models locally. These systems are designed to handle heavy inference workloads that were once limited to data centers.

Key features include:

- Large memory capacity for model loading

- Optimized GPU architectures

- High-speed processing for real-time inference

- Support for multiple model deployments

Cost vs Long-Term Value Consideration

While initial investment can be high, some users view it as a long-term cost-saving measure.

Factors influencing this decision include:

- Reduced dependency on cloud subscriptions

- The option to classify hardware as a business expense

- Long-term operational savings

- Increased productivity from offline capability
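
The cost trade-off above comes down to a break-even calculation. The sketch below shows the arithmetic only; every figure in the usage example is a made-up placeholder, not a real price for any hardware or subscription, and the function name is hypothetical.

```python
import math
from typing import Optional


def break_even_months(
    hardware_cost: float,
    cloud_cost_before: float,   # monthly cloud spend without local hardware
    cloud_cost_after: float,    # monthly cloud spend once simple tasks run locally
    local_running_cost: float,  # monthly electricity and upkeep for the local machine
) -> Optional[int]:
    """Return the number of months until the hardware pays for itself.

    Returns None when running locally does not save money each month,
    in which case the purchase never breaks even on cost alone.
    """
    monthly_saving = cloud_cost_before - cloud_cost_after - local_running_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(hardware_cost / monthly_saving)


if __name__ == "__main__":
    # Placeholder figures for illustration only.
    months = break_even_months(
        hardware_cost=3000,
        cloud_cost_before=200,
        cloud_cost_after=60,
        local_running_cost=15,
    )
    print(months)  # 24
```

The calculation ignores factors such as hardware resale value, tax treatment, and the time spent on setup and maintenance, all of which can move the result in either direction.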

Limitations of Hybrid AI Systems

Despite its advantages, the Claude Code local LLM hybrid setup is not a perfect replacement for cloud-based AI systems.

Technical and Practical Constraints

Key limitations include:

- Local models still lag behind top-tier cloud models in reasoning ability

- Hardware requirements remain expensive

- Setup complexity can be high for non-technical users

- Maintenance and updates require manual effort

Dependency on Model Quality

Local LLM performance depends heavily on model selection and hardware optimization. Smaller models may struggle with tasks that cloud systems handle easily.
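
A rough way to see how model selection interacts with hardware is to estimate the memory needed just to hold the model weights: parameter count multiplied by bytes per parameter. The sketch below assumes that simple formula and deliberately leaves out the context cache, activations, and runtime overhead, so real requirements will be higher. The function name and the 7-billion-parameter example are illustrative.

```python
def weight_memory_gb(num_params_billion: float, bits_per_param: int) -> float:
    """Estimate memory for model weights only, in gigabytes (10^9 bytes)."""
    total_bytes = num_params_billion * 1e9 * bits_per_param / 8
    return total_bytes / 1e9


if __name__ == "__main__":
    # A hypothetical 7-billion-parameter model at two precisions.
    print(weight_memory_gb(7, 16))  # 14.0
    print(weight_memory_gb(7, 4))   # 3.5
```

The same model needs a quarter of the weight memory at 4-bit precision compared with 16-bit, which is why quantized models are a common choice on consumer hardware, usually at some cost in output quality.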

Future Outlook of Hybrid AI Development

The evolution of the Claude Code local LLM hybrid setup suggests that hybrid workflows may become the standard for AI-assisted development.

Expected Industry Trends

- Increased adoption of local-first AI workflows

- More efficient open-source LLMs

- Cheaper and more powerful AI hardware

- Improved integration between cloud and local tools

Potential Long-Term Impact

As both cloud and local systems improve, developers may gain more flexibility in how they manage AI workloads. This could lead to:

- Lower operational costs

- More privacy-focused AI usage

- Faster development cycles

- Reduced dependence on single providers

FAQ Section

What is a Claude Code local LLM hybrid setup?

It is a system where Claude Code is used alongside locally hosted AI models to balance performance, cost, and efficiency.

Why do developers use local LLMs with Claude Code?

They use them to reduce token usage, cut costs, and improve workflow flexibility during coding and AI tasks.

Are local LLMs as powerful as cloud models?

Not yet. Cloud models generally perform better, but local LLMs are improving and can handle many routine tasks effectively.

Is a hybrid AI setup expensive to implement?

Initial hardware costs can be high, but long-term savings may offset subscription and API expenses.

Conclusion

The Claude Code local LLM hybrid setup represents a growing shift in how developers approach AI-assisted workflows. By combining cloud-based intelligence with locally hosted models, users can reduce costs, improve efficiency, and gain more control over their computing environment. While not a complete replacement for cloud AI systems, hybrid setups are becoming a practical middle ground for many developers seeking a better balance between performance and resource management.
